Soundtrack: pick one of these two albums, James Holden – The Inheritors or Caterina Barbieri – Ecstatic Computation… or better yet, play one and then the other. They're both worth it.

–

In my free time I make music. It's been a constant in my life since I started playing piano and classical guitar back in the early 90s.

For a couple of years I was even lucky enough to work as a session musician, playing bass with Killer Barbies, which took me to concerts in Spain, Portugal, Germany, Switzerland and Japan.

The best part of it all, though, was the people I met and, above all, starting a band with some friends.

I only took a break for a few years when I was totally absorbed by work. The rest of the time I've always dedicated part of my life to learning different instruments and making music or, at least, trying to.

I'm not an expert musician, though: countless harmony concepts escape me. As for virtuosity on an instrument, the same thing happens to me as with the different design specializations: there is so much variety, and I'm so hungry to learn everything I can, that I'm unable to go deep into just one thing. So I opt for horizontal rather than vertical knowledge, which gives me perspective instead of specialization.

I've gone from classical music (who hasn't played Beethoven's Für Elise when learning piano?) to more or less experimental electronic music.

Experimental, but only up to a point: it doesn't reach what John Cage understood as experimental, which is creating without knowing what is going to happen (hence his use of the I Ching and randomization techniques).

Then again, under his premise very little music can really be considered experimental.

In any case, I went from playing bass and guitar to playing synthesizers (especially modular ones) for a simple reason: lack of time.

Playing an instrument with a "classical" interface (I'm going to take the liberty here of including pads used for finger drumming) requires psychomotor training and muscle memory to be able to play the right thing, with exact tempo and precise technique. This means hours of practice just to "play well", and only then do you get time for "your music".

However, when it comes to exploring sound and making music with synthesizers in a less traditional format, the psychomotor requirement is replaced by a purely intellectual exercise. To give some examples:

- Fingering is replaced by entering notes into a sequencer.

- Attack, decay, sustain and release parameters (ADSR) don't depend on the musician's strength and articulation, but on turning some potentiometers.

- Changing timbre doesn't require changing instruments; you just need to change the waveform you're working with.
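To make the ADSR point in the list above a bit more concrete, here's a minimal sketch of an envelope reduced to four numbers, the kind of values those potentiometers would set. The function names and parameters are hypothetical, and the straight-line segments are an assumption made for simplicity (hardware envelopes are often curved), so take it as an illustration rather than how any particular synthesizer works.

```python
def held_level(t, attack, decay, sustain):
    """Envelope level while the key (or sequencer gate) is still held."""
    if attack > 0 and t < attack:
        # Attack: ramp from 0 up to full level.
        return t / attack
    t_after_attack = t - attack
    if decay > 0 and t_after_attack < decay:
        # Decay: fall from full level down to the sustain level.
        return 1.0 - (1.0 - sustain) * (t_after_attack / decay)
    # Sustain: stay put for as long as the note is held.
    return sustain


def adsr_level(t, attack, decay, sustain, release, note_length):
    """Envelope level (0.0 to 1.0) at t seconds after the note starts.

    attack, decay and release are durations in seconds, sustain is a level,
    and note_length is how long the note is held. Linear segments are an
    assumption of this sketch.
    """
    if t < 0:
        return 0.0
    if t < note_length:
        return held_level(t, attack, decay, sustain)
    # Release: fade to silence from whatever level the note had reached.
    level_at_release = held_level(note_length, attack, decay, sustain)
    elapsed = t - note_length
    if release <= 0 or elapsed >= release:
        return 0.0
    return level_at_release * (1.0 - elapsed / release)
```

Calling `adsr_level(t, 0.1, 0.2, 0.5, 0.4, 1.0)` for increasing values of `t` traces a quick rise, a drop to half level, a plateau while the note is held and a fade after it ends. Nothing in it depends on how hard or how precisely anyone plays.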

In short, each interface requires you to engage with the instrument differently.

It would be quite complicated to establish the moment when electronic music originated. In any case, we'd have to differentiate synthesis from other techniques used in musique concrète that were precursors to sampling and granular synthesis.

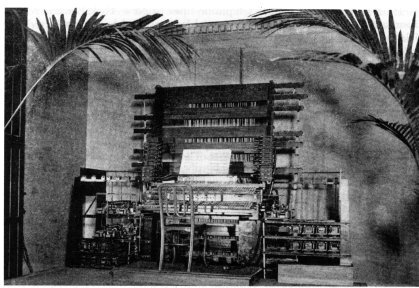

We could travel back to the Telharmonium, from the late 19th century, and its system for streaming music over telephone lines.

But I won't go back that far. I'll jump to the mid-60s, when the first commercial synthesizers were created.

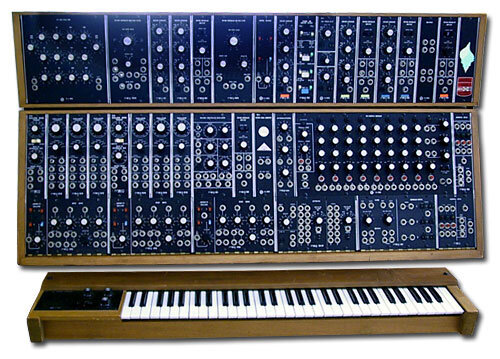

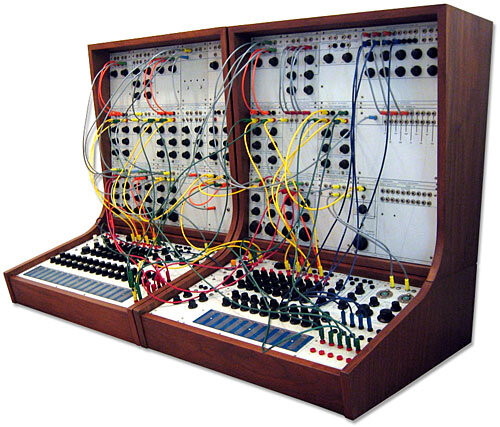

In parallel, Moog emerged on the East Coast of the United States and Buchla on the West Coast.

Moog bet on creating an electronic instrument that would expand musicians' sonic palette, with an interface that combined the classic (a piano-style keyboard) with the "modern" (a series of potentiometers for sculpting sound). The keyboard offered a familiar way to enter notes, and the potentiometers, labeled in clear language, were easy to understand: filter, attack, wave type, etc.

Meanwhile, on the West Coast, Buchla, possibly influenced by hippie culture and LSD, created a more experimental, more esoteric electronic instrument that, from the start, skipped certain "rules" and so encouraged breaking away from traditional Western pop music. To give a couple of examples of how it broke conventions: it included a 10-step sequencer instead of an 8- or 16-step one (which would be the "logical" divisions of a 4/4 time signature), and its note input interface had a playful, artistic touch.

But let's go to practical examples.

The Doors in 1967 with a Moog:

And one of the first recordings with a Buchla: Morton Subotnick's Silver Apples of the Moon, which is also the first piece of electronic music commissioned directly by a record company:

Today we can see how synthesizer manufacturers have inherited and evolved the designs of Moog and Buchla.

In the wild world of modular synthesis we find the most cryptic interfaces, from manufacturers like Make Noise or Mutable Instruments, alongside more explicit ones like Doepfer's.

Meanwhile, other companies manufacture synthesizers with traditional keyboards and a set of more recognizable controls (Yamaha, Sequential with its Prophet line, Korg…).

There is also some middle ground in the Arturia MicroFreak: a traditional keyboard layout, but on a touch surface inherited from Buchla, along with a series of cryptic parameters.

I don't want to get too geeky with all this, so I'm not going to talk about the different approaches to sound synthesis (subtractive, additive, FM, waveshaping, etc.).

It's obvious that a musician who wants a certain type of sonic palette will choose the synthesizer that comes closest to it... but, from my point of view, what matters most is how each system drags you into its territory through its interface.

If Buchla gives you 10 sequencer steps, you might end up with a 5/4 time signature (though probably not like Dave Brubeck's Take Five). In principle, this will sound "different" to a listener raised in traditional Western pop musical culture, but after a few repetitions it will start to make sense in their head.

Because in the end it all comes down to how much we humans like what's repetitive, recursive, symmetric... No matter how strange a sequence of notes and its rhythmic pattern might be, the brain always ends up assimilating it if it's repeated enough times.
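For anyone curious about the arithmetic behind the 10-step example, here's a small sketch. It assumes every step lasts the same note value (an eighth note by default); that assumption and the function name are mine, not something taken from Buchla's design.

```python
from fractions import Fraction


def loop_length_in_quarters(steps, step_note=Fraction(1, 8)):
    """Length of one pass through the sequencer, measured in quarter notes.

    Assumption: all steps share the same duration, given as a fraction of a
    whole note (1/8 = eighth note, 1/16 = sixteenth note).
    """
    return Fraction(steps) * step_note / Fraction(1, 4)


print(loop_length_in_quarters(10))                   # 5 -> a bar of 5/4
print(loop_length_in_quarters(8))                    # 4 -> a bar of 4/4
print(loop_length_in_quarters(16, Fraction(1, 16)))  # 4 -> a bar of 4/4
```

Eight or sixteen steps fall neatly into four beats, while ten steps of the same size leave you with five. The machine nudges you toward 5/4 before you've made a single musical decision.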

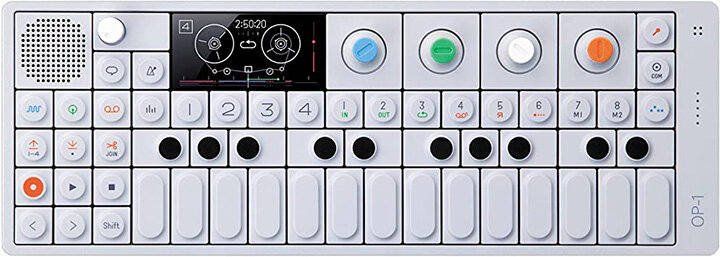

Another example of devices with totally different interfaces would be the Teenage Engineering OP-1 and the Elektron Octatrack. I know they're not fully comparable, since they don't have the same capabilities: the Octatrack has no synthesis engine (though one could be emulated with single-cycle waveforms). But they are comparable as standalone music production platforms, and they are perfect examples of how interface design conditions the act of creation.

The Elektron machine has one of the hardest interfaces I've ever tried. It's not intuitive, the menus are dense and multi-layered, and performing an action normally requires pressing a combination of keys. It's an interface whose power lies in the user's capacity for recall. On the other hand, once you get past the learning curve, it becomes one of the deepest and most powerful machines you can find.

The OP-1, on the contrary, is totally friendly and has a playful character that aims to make the experience fun. It has no qualms about dispensing with the usual lexicon and showing you a cow instead. The fact that the delay has a pitch-shifting value of -5 stops worrying you; what really matters is whether the cow digests faster or slower. We could say its interface is based on recognition (if anyone is actually capable of recognizing an audio compression algorithm in an 8-bit animation of a boxer).

How the interface conditions the way of making music (beyond synthesizers vs. traditional instruments) is something I've been thinking about ever since I switched from Logic to Ableton Live many years ago.

Logic and Ableton Live's Arrangement View show us the structure of the song we're creating in an analytical view. You may add more or fewer sounds, arrangements or tracks, but the linearity inherited from recording to tape is always there, just as video recordings are structured linearly due to the inherent sequentiality of the medium.

Ableton, by contrast, broke the analytical paradigm with its Session View, offering the user a synthetic approach to creating music: it puts an entire song, or an entire DJ set, in front of the user at once. Linearity is broken: all the small fragments of your composition are within reach simultaneously, one click away from playing.

Paradoxically, I've gone back to Logic, because Ableton's synthetic view drags me into wasting time creating small loops that lead to repetition and recursion, and it makes me abandon the analytical approach completely.

I don't think Buchla is better than Moog, or that the OP-1 is better than the Octatrack, or that Ableton is better than Logic... what I do believe is that we should choose the interface that best suits our purposes.

And, of course, as designers we should design the interface that best suits users' purposes.

–

I couldn't close this text without a special mention of monome, the creators of grid and arc: authentic minimalist marvels, heirs to the purest Dieter Rams.